IBM open-sources its Granite AI models - and they mean business

Many companies claim to have open-sourced their LLMs, but IBM actually did it. Here's how.

Say goodbye to restrictive AI models and hello to a future where you choose the intelligence of your devices.

Most Americans would rather read a history book than analyze their personal data - and these three data types are the most intimidating.

A smaller model isn't necessarily a bad thing.

A new 'Makerspace' partnership at Georgia Tech hopes to prep the next generation of AI builders with Nvidia's cutting-edge computing power.

Half a neural network can be ripped away without affecting performance, thereby saving on memory needs. But there's bad news, too.

Two new large language models, Jamba and DBRX, dramatically reduce the compute and memory needed for predictions, while meeting or beating the performance of top models such as GPT-3.5 and Llama 2.

With IBM and Google Cloud enlisted as partners, the AI Institute pledges to ensure accountability across various sectors - from healthcare and education to finance and manufacturing.

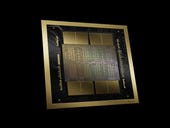

The successor to Nvidia's 'Hopper' GPU design is the 'Blackwell' chip family, which more than doubles the number of transistors and multiplies floating-point calculations several fold, to speed up large language models by 25 times.

Arc Search offers several cool tricks to help you with your web queries on iOS. But one tool in particular shines above the rest.

Google finally launches its Find My Device network. Here are the Android models that support it

One of the best Android watches I've tested is not made by Google or Samsung

3 million smart toothbrushes were not used in a DDoS attack after all, but it could happen

Raspberry Pi 4 Model B review: A capable, flexible and affordable DIY computing platform

Which Microsoft Surface PC is right for you?

Inside a fake $20 '16TB external M.2 SSD'
